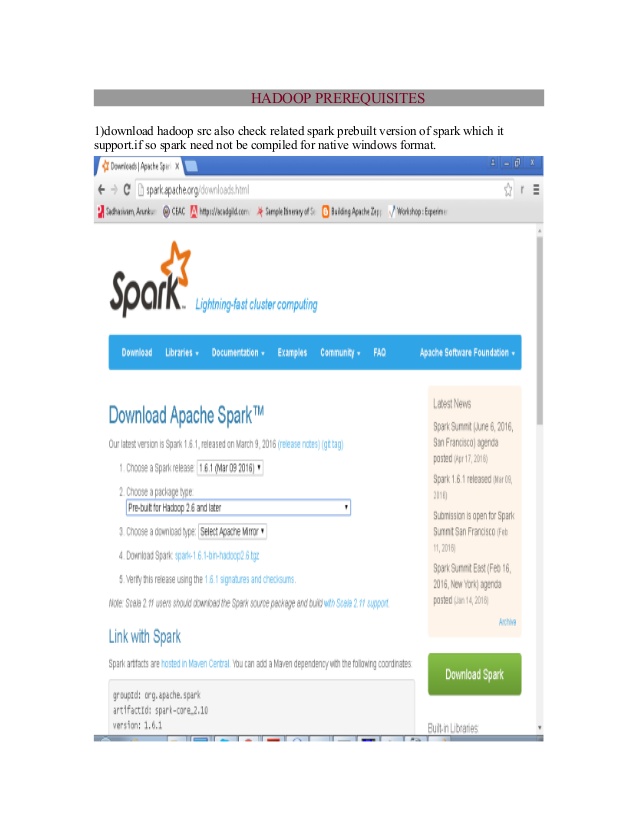

Developing directly on a Hadoop cluster is not the development environment you would dream of for yourself. Of course it makes testing your code easy, as you can submit it instantly, but in the worst case your editor will be vi.

Obviously, what new-generation developers want is a clever editor running on their Windows (Linux?) desktop. By clever editor I mean one that offers syntax completion, suggests importing packages when you use a procedure, flags unused variables, and so on. Small teaser: IntelliJ IDEA from JetBrains is a serious contender nowadays, apparently much better than Eclipse, which is more or less dying…

We eventually improved the editor side a bit by installing VSCode and editing our files with the SSH FS plugin. In other words, we edit files located directly on the server over SSH and then, from a shell terminal (MobaXterm, PuTTY, …), we submit scripts directly to our Hadoop cluster. This works if you do Python (even though no PySpark plugin is available in VSCode), but if you do Scala you also have to run an sbt command each time to compile your Scala program. A bit cumbersome…

As said above, IntelliJ IDEA from JetBrains is a very good Scala editor and the Community Edition does a pretty decent job. But then difficulties arise, as this software lacks a remote SSH edition… So it works well for pure Scala scripts, but if you need to access your Hive tables you have to do some configuration work…

This blog post is all about this: building a productive Scala/PySpark development environment on your Windows desktop and accessing the Hive tables of a Hadoop cluster.

Before going into the details, I first tried to get a raw Spark installation working on my Windows machine. I started by downloading it from the official web site and unzipped it into the default `D:\spark-2.4.4-bin-hadoop2.7` directory.

You must set the `SPARK_HOME` environment variable to the directory where you unzipped Spark. For convenience you also need to add `D:\spark-2.4.4-bin-hadoop2.7\bin` to the `PATH` of your Windows account (restart PowerShell afterwards) and confirm it's all good by starting Spark, which at this stage fails with:

    19/11/15 17:15:37 ERROR Shell: Failed to locate the winutils binary in the hadoop binary path
    java.io.IOException: Could not locate executable null\bin\winutils.exe in the Hadoop binaries.
        at .Shell.getQualifiedBinPath(Shell.java:379)
        at .Shell.getWinUtilsPath(Shell.java:394)
        at .Shell.
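The Spark setup described above boils down to a few environment variables, and the `null` in `null\bin\winutils.exe` hints at one more. As a minimal sketch (a hypothetical helper, not something shipped with Spark or Hadoop), the Python script below checks that `SPARK_HOME` is set, that its `bin` folder is on `PATH`, and that `HADOOP_HOME\bin\winutils.exe` exists. The `HADOOP_HOME` check rests on an assumption stated in the code: Hadoop builds the winutils path from the `hadoop.home.dir` system property or the `HADOOP_HOME` variable, and prints `null` when neither is set. The function name `check_spark_env` is illustrative only.

```python
"""Hypothetical pre-flight check for a Spark-on-Windows setup.

Not part of Spark or Hadoop: a small sketch that verifies the environment
variables a new PowerShell session will hand to Spark.
"""
import os
from pathlib import Path


def _norm(path: str) -> str:
    # Normalise separators, trailing slashes and (on Windows) case,
    # so PATH entries can be compared reliably.
    return os.path.normcase(os.path.normpath(path))


def check_spark_env(env=None):
    """Return a list of problems found; an empty list means the checks passed."""
    env = os.environ if env is None else env
    problems = []

    # 1. SPARK_HOME must point at the unzipped Spark directory,
    #    and its bin folder should be on PATH.
    spark_home = env.get("SPARK_HOME")
    if not spark_home:
        problems.append("SPARK_HOME is not set")
    else:
        spark_bin = Path(spark_home) / "bin"
        if not spark_bin.is_dir():
            problems.append(f"{spark_bin} does not exist")
        path_entries = {_norm(p) for p in env.get("PATH", "").split(os.pathsep) if p}
        if _norm(str(spark_bin)) not in path_entries:
            problems.append(f"{spark_bin} is not on PATH")

    # 2. Assumption: Hadoop resolves winutils.exe as <hadoop home>\bin\winutils.exe,
    #    where <hadoop home> comes from -Dhadoop.home.dir or HADOOP_HOME.
    #    When neither is set the home prints as "null", hence null\bin\winutils.exe.
    #    This sketch only checks the HADOOP_HOME variable.
    hadoop_home = env.get("HADOOP_HOME")
    if not hadoop_home:
        problems.append("HADOOP_HOME is not set (Hadoop will look for null\\bin\\winutils.exe)")
    else:
        winutils = Path(hadoop_home) / "bin" / "winutils.exe"
        if not winutils.is_file():
            problems.append(f"{winutils} not found")

    return problems


if __name__ == "__main__":
    issues = check_spark_env()
    for issue in issues:
        print("WARNING:", issue)
    if not issues:
        print("SPARK_HOME, PATH and HADOOP_HOME look consistent")
```

Save it under any name, for example `check_spark_env.py`, and run it with `python check_spark_env.py` from a newly opened PowerShell window: variables changed in the Windows settings are only seen by sessions started afterwards, which is also why PowerShell must be restarted after editing `PATH`.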